
Connecting to GridDB Cloud v3.2 from Your Local Dev Environment (No VPN, No VNet Peering)

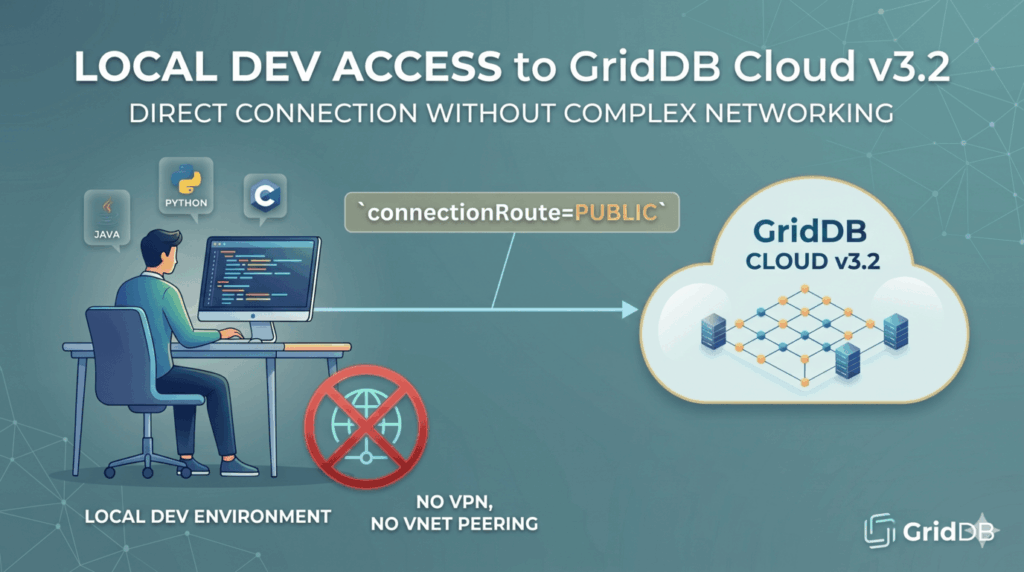

With the release of GridDB Cloud v3.2, you can now connect to GridDB Cloud from your local machine using the native NoSQL clients (Java, Python, etc.) without spinning up VNet peering, without configuring a VPN, and without falling back to the Web API.

If you’ve followed along with our previous blogs covering the Azure Marketplace signup, the VNet peering setup, or the various Azure Connected Services integrations, you know that getting the native API working from outside the cloud used to be a bit tricky. You either had to host your code inside an Azure VNet peered to GridDB Cloud, set up a VPN to tunnel in, or resign yourself to the Web API. For local dev iteration, none of those options was convenient.

With v3.2, that changes. You can now point your local Python or Java client directly at your cloud instance over the public internet, authenticate, and run queries. You can follow the official quick start guide, but I will summarize it here and share some tips that worked for me.

GridDB Cloud v3.2 is available via the Azure Marketplace. If you haven’t signed up yet, grab either the Pay-As-You-Go plan or the Fixed Monthly plan. Our Azure Marketplace signup blog walks through the whole process.

The Checklist

Here’s the short version of what you need to do, in order. I’ll go deeper on each step below. First, we need to prepare our environment.

Preparation

1. Generate your notification provider URL from the Cloud dashboard before whitelisting
2. Whitelist your local machine’s IP in the GridDB access area
3. Download the EE-only library jars from the Cloud help page (they’re not on Maven)
4. Extract gridstore-advanced.jar from the RPM if you’re not on Rocky Linux
5. Install the Python client
6. Add all the jars, including gridstore-advanced, to your CLASSPATH
7. Append connectionRoute=PUBLIC to your connection details

That last one is the whole reason this works. Without it, the client tries to connect over the private route and will time out.

1. Generate the Notification Provider URL (Do This First!)

Before you do anything else in the Cloud dashboard, head to the cluster settings and manually generate the notification provider URL. If you whitelist your IP first, it will fail with a strange warning. Save that URL somewhere; you’ll need it for your connection string.

2. Whitelist Your IP

Now go to the access control area of the Cloud dashboard and add your local machine’s public IP to the whitelist. If you click ‘Add my IP’, it will automatically add your current machine’s public IP address.

3. Download the EE Library Files

Head into the Cloud dashboard’s help/downloads/support section and grab the Enterprise Edition library bundle labeled “GridDB Cloud Library and Plugin download”. These jars are not available on Maven Central or anywhere else. They ship exclusively with the EE build of GridDB, which is what the Cloud runs on. You need them to make SSL connections to the cloud.

4. Extract gridstore-advanced.jar from the RPM

The EE download is distributed as an RPM. If you’re running Rocky Linux (or any RHEL-compatible distro), you can install it normally. But if you’re on Ubuntu, Debian, or pretty much anything else, you need to manually crack the RPM open to pull the jar out:

    $ rpm2cpio griddb-ee-java-lib-5.9.0-linux.x86_64.rpm | cpio -idmv

This drops the contents into your current directory. The jar you want is gridstore-advanced.jar, which lives inside usr/share/java/ or similar after extraction. Without this jar on your classpath, your SSL handshake to the cloud will fail.

5. Install the Python Client

This is the standard Python client install, nothing new here. Follow the official Python client getting started guide for the full walkthrough (install Java, clone the python_client repo, mvn install, then pip install .).

6. Add Everything to CLASSPATH

Once you have all your jars in one place (gridstore.jar, gridstore-jdbc.jar, gridstore-arrow.jar, arrow-memory-netty.jar, and critically gridstore-advanced.jar), export your CLASSPATH:

    $ export CLASSPATH=/path/to/lib/gridstore.jar:/path/to/lib/gridstore-jdbc.jar:/path/to/lib/gridstore-arrow.jar:/path/to/lib/arrow-memory-netty.jar:/path/to/lib/gridstore-advanced.jar

If gridstore-advanced.jar isn’t on this path, the connection will fail.

7. Add connectionRoute=PUBLIC

This is the magic parameter that tells the client to use the new public route introduced in v3.2. In your Python code, your factory config should include it:

    self.gridstore = None
    try:
        self.gridstore = GridDB.factory.get_store(
            notification_provider=self.notification_provider,
            cluster_name=self.cluster_name,
            username=self.username,
            password=self.password,
            database=self.database,
            connection_route='PUBLIC'  # NOTE: PUBLIC must be in ALL CAPS
        )
        print(f"Successfully connected to {self.cluster_name}.")
    except Exception as e:
        print(f"Failed to connect to GridDB: {e}")

Without this, the client will try to use the internal route and you’ll be stuck waiting.

Python Example

With all the pieces in place, here’s what a basic connect-and-query looks like from your local machine:

    $ (venv) israel@griddb:~/development/griddb-university/python$ export CLASSPATH=$CLASSPATH:./gridstore.jar:./gridstore-arrow.jar:./arrow-memory-netty.jar:./gridstore-advanced.jar
    $ (venv) israel@griddb:~/development/griddb-university/python$ export GRIDDB_NOTIFICATION_PROVIDER="URL"
    $ export GRIDDB_CLUSTER_NAME="gs_clustermfcloud87"
    $ export GRIDDB_USERNAME="admin"
    $ export GRIDDB_PASSWORD="password"
    $ export GRIDDB_DATABASE="nSt"
    $ (venv) israel@griddb:~/development/griddb-university/python$ python3 main.py
    $ JVM already started.
    $ Attempting to connect to GridDB…
    $ Successfully connected to gs_clustermfcloud8737.
    $ Successfully created TimeSeries: SamplePython_timeseries1
    $ Successfully put row into SamplePython_timeseries1: [datetime.datetime(2025, 10, 1, 15, 0, tzinfo=datetime.timezone.utc), 10.21]
    $ — Reading from SamplePython_timeseries1 —
    $ [datetime.datetime(2025, 10, 1, 15, 0), 10.21]

That’s it. No Azure Function wrapping, no container, no VPN client running in the background; just your script, talking directly to GridDB Cloud. The full sample Python source code, along with Java sample code, is included with this article.

Java from Your Local Machine

As Java is the native interface for GridDB, let’s also take a look at connecting via Java. The steps are largely the same, including adding the new connectionRoute property and having the special library for making SSL connections to GridDB Cloud.

Gotcha #1: URL-encode the Notification Provider Value

When you pass the notification provider URL into Java’s GridStoreFactory, you need to URL-encode the value. If you don’t, Java’s property parser will see the &connectionRoute=PUBLIC portion as a separate parameter and silently drop it, and you’ll be left wondering why your connection is timing out even though everything looks right. The fix is to encode the full URL before passing it in:

    import java.net.URLEncoder;
    import java.nio.charset.StandardCharsets;

    // Percent-encode the whole provider URL so '&' and '?' are not parsed as separators.
    // Note: encode(String, String) declares UnsupportedEncodingException.
    String notificationProvider = URLEncoder.encode(
        "https://<your-provider-url>?clusterName=<name>&connectionRoute=PUBLIC",
        StandardCharsets.UTF_8.toString()
    );

Gotcha #2: Manually Install gridstore-advanced.jar to Your Local Maven Repo

Same jar as before, same reason: it is not on Maven Central.
To use it with Maven, you have to install it to your local .m2 repository manually:

    $ mvn install:install-file \
        -Dfile=/path/to/your/python/gridstore-advanced.jar \
        -DgroupId=com.github.griddb \
        -DartifactId=gridstore-advanced \
        -Dversion=5.9.0 \
        -Dpackaging=jar

Then add it as a dependency in your pom.xml:

    <dependency>
        <groupId>com.github.griddb</groupId>
        <artifactId>gridstore-advanced</artifactId>
        <version>5.9.0</version>
    </dependency>

Now Maven will resolve it like any other dependency when you build your project.

Java Example

    $ (venv) israel@griddb:~/development/griddb-university/java$ java -jar target/java-samples-1.0-SNAPSHOT-jar-with-dependencies.jar
    $ jdbc:gs:///gs_clustermfcloud8737/nl7QftSt?notificationProvider=https%3A%2F%2Fdbaasshare&connectionRoute=PUBLIC
    $ CREATE TABLE IF NOT EXISTS exampleJdbc (id integer, value string);
    $ INSERT INTO exampleJdbc values (0, 'test0'),(1, 'test1'),(2, 'test2'),(3, 'test3'),(4, 'test4')
    $ SELECT * FROM exampleJdbc
    $ id value 0 test0 1 test1 2 test2 3 test3 4 test4 0 test0 1 test1 2 test2 3 test3 4 test4
    $ Running SQL: SELECT ts, AVG(temp) as avg_temp FROM device WHERE ts BETWEEN TIMESTAMP('2020-07-12T00:01:20Z') AND TIMESTAMP('2020-07-12T00:14:00Z') GROUP BY RANGE (ts) EVERY(20, SECOND)
    $ java.sql.SQLException: [280005:SQL_DDL_TABLE_NOT_EXISTS] Parse SQL failed, reason = GET TABLE failed. (reason=GET TABLE failed. (reason=Specified table 'device' is not found)) on executing query (sql="SELECT ts, AVG(temp) as avg_temp FROM device WHERE ts BETWEEN TIMESTAMP('2020-07-12T00:01:20Z') AND TIMESTAMP('2020-07-12T00:14:00Z') GROUP BY RANGE (ts) EVERY(20, SECOND) ") (db='nl7QftSt') (user='S01K7vrCuF-israel') (clientId='c761d357-ec46-4e5c-8f80-405e69635810:4') (source={clientId=155, address=172.22.5.69:46422}) (connection=PUBLIC) (address=20.205.145.126:20001, partitionId=8289)
    $ Testing GridDB NoSQL
    $ Creating Container

The SQLException in the middle of this output comes from the sample querying a device table that does not exist in this database, not from the connection itself; the error message reports (connection=PUBLIC), so the public route is in use.

And again, the sample code will be shared here.
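Gotcha #1 is easier to see with a small experiment. The sketch below uses Python’s standard urllib.parse module, not GridDB’s own parser (which may behave differently), to show how an unencoded provider URL leaks its own query parameters into the outer parameter list. The host example.invalid and the token and region parameters are made up for illustration, as is the build_params helper.

```python
from urllib.parse import parse_qs, quote

# Hypothetical provider URL; the host and its query parameters are placeholders.
provider_url = "https://example.invalid/provider?token=abc&region=east"


def build_params(provider: str, encode: bool) -> str:
    """Build a 'key=value&key=value' string like the tail of the JDBC URL above."""
    value = quote(provider, safe="") if encode else provider
    return f"notificationProvider={value}&connectionRoute=PUBLIC"


raw = parse_qs(build_params(provider_url, encode=False))
encoded = parse_qs(build_params(provider_url, encode=True))

# Unencoded: the provider URL is cut at its first '&' and 'region' becomes a stray key.
print(raw["notificationProvider"][0])
print(sorted(raw))

# Encoded: the provider URL survives intact as a single value.
print(encoded["notificationProvider"][0])
print(sorted(encoded))
```

In the unencoded case the provider value is truncated and an extra key appears, which is the same class of problem the Java gotcha describes.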
In this case, we are connecting to GridDB Cloud via both the NoSQL interface and the SQL interface through JDBC. Both work once the steps above are followed.

C Client

Note that if you would like to use the C Client, you will need to follow the same procedure as for the Java code. That is, you will need to add the public route to your connection details, extract the .rpm called griddb-ee-c-lib-5.8.0-linux.x86_64.rpm (assuming you are not using CentOS/Rocky Linux), and grab the library files libgridstore.so.0.0.0 and libgridstore_advanced.so.0.0.0 and the header (gridstore.h). Once you have those in place, add the public route to your connection details:

    const GSPropertyEntry props[] = {
        { "notificationProvider", "https://<url-encoded-provider-url>" },
        { "clusterName", "<your cluster name>" },
        { "database", "public" },
        { "user", "<user>" },
        { "password", "<password>" },
        { "sslMode", "PREFERRED" },
        { "connectionRoute", "PUBLIC" }
    };

And you should be good to go for the C Client as well!

Conclusion

Being able to hit GridDB Cloud directly from your local dev machine is a genuinely big deal for iteration speed. If you haven’t signed up for GridDB Cloud v3.2 yet, it’s exclusively on the Azure Marketplace, where you can grab the Pay-As-You-Go plan

This tutorial shows how to generate evolving ambient music driven by IoT sensor data. We’ll ingest sensor readings into a GridDB database, map those readings to musical parameters using OpenAI, and call ElevenLabs Music to render an audio track. The UI is built with React + Vite, and the backend is Node.js.

Introduction

Ambient music thrives on context. Here, the environment literally composes the score. Heat can slow the tempo, humidity can soften the timbre, and human presence can thicken the arrangement. We’ll stitch together a small system: devices post telemetry (we will use the data directly), GridDB keeps the data, the AI model creates music parameters, and ElevenLabs renders audio that you can play instantly in the browser.

System Architecture

The system has several core components working together to turn IoT data into ambient sound:

IoT Data Source

Environmental sensors capture values such as temperature, humidity, sound levels, and occupancy. These readings are the raw input for the music generation process.

Node.js Backend

Node.js acts as the central orchestrator. It receives IoT sensor readings and coordinates interactions between the AI models, the music generator, and the database.

OpenAI Model

The IoT data is processed by an OpenAI model, which transforms it into a musical prompt such as “calm ambient soundscape with airy textures and slow tempo.” This ensures the music reflects the current environment in a more human-like, descriptive way.

ElevenLabs Music API

The generated music prompt is sent to the ElevenLabs Music API, which produces an audio track that matches the description. The result is ambient audio that adapts to real-world conditions.

GridDB Database

Both the music prompt and the audio metadata (such as file path or data URL) are stored in GridDB. GridDB also keeps the original IoT readings.
React + Vite Frontend

The frontend provides a web-based interface where users can trigger new music generation, view sensor snapshots, and play the most recent ambient tracks.

Prerequisites

Node.js

This project is built using React + Vite, which requires Node.js version 16 or higher. You can download and install Node.js from https://nodejs.org/en.

OpenAI

Create the OpenAI API key here. You may need to create a project and enable a few models. In this project, we will use the OpenAI model gpt-5-mini to create an audio prompt.

GridDB

Sign Up for GridDB Cloud Free Plan

If you would like to sign up for a GridDB Cloud Free instance, you can do so at the following link: https://form.ict-toshiba.jp/download_form_griddb_cloud_freeplan_e. After successfully signing up, you will receive a free instance along with the necessary details to access the GridDB Cloud Management GUI, including the GridDB Cloud Portal URL, Contract ID, Login, and Password.

GridDB WebAPI URL

Go to the GridDB Cloud Portal and copy the WebAPI URL from the Clusters section. It should look like this:

GridDB Username and Password

Go to the GridDB Users section of the GridDB Cloud portal and create or copy the username for GRIDDB_USERNAME. The password is set when the user is first created. Use this as the GRIDDB_PASSWORD. For more details on getting started with GridDB Cloud, please follow this quick start guide.

IP Whitelist

When running this project, please ensure that the IP address where the project is running is whitelisted. Failure to do so will result in a 403 (forbidden) status code. You can use a website like What Is My IP Address to find your public IP address. To whitelist the IP, go to the GridDB Cloud Admin and navigate to the Network Access menu.

ElevenLabs

You need an ElevenLabs account and API key to use this project. You can sign up for an account at https://elevenlabs.io/signup. After signing up, go to the Developer section, then create and copy your API key. Make sure to enable the Music Generation access permission.

How to Run

1. Clone the repository

Clone the repository from https://github.com/junwatu/grid-sound-ambient to your local machine.

    $ git clone https://github.com/junwatu/grid-sound-ambient.git
    $ cd grid-sound-ambient
    $ cd apps

2. Install dependencies

Install all project dependencies using npm.

    $ npm install

3. Set up environment variables

Copy file .env.example to .env and fill in the values:

    # Copy this file to .env.local and add your actual API keys
    # Never commit .env.local to version control

    # ElevenLabs API Key for ElevenLabs Music
    ELEVENLABS_API_KEY=
    OPENAI_API_KEY=
    GRIDDB_WEBAPI_URL=
    GRIDDB_PASSWORD=
    GRIDDB_USERNAME=
    WEB_URL=http://localhost:3000

Please look at the section on Prerequisites before running the project.

4. Run the project

Run the project using the following command:

    $ npm run start

5. Open the application

Open the application in your browser at http://localhost:3000 or any address that WEB_URL is set to. You also need to allow the browser to access your microphone.

Building The Ambient Music Generator

IoT Data

In this project, we will use pre-made IoT data. The data is an array of sensor snapshots. Each object is a single time-stamped reading for a building zone. This data mimics the conditions a real IoT sensor would report.

    [
      {
        "timestamp": "2025-08-20T09:15:00",
        "zone": "Meeting Room A",
        "temperature_c": 22.8,
        "humidity_pct": 47,
        "co2_ppm": 1020,
        "voc_index": 185,
        "occupancy": 7,
        "noise_dba": 49,
        "productivity_score": 65,
        "trend_10min.co2_ppm_delta": 120,
        "trend_10min.noise_dba_delta": 1,
        "trend_10min.productivity_delta": -5
      },
      …
    ]

You can find the sample data in apps/data/iot_music_samples.json.

User Interface

The UI is a small React app (Vite + Tailwind) that drives the end‑to‑end flow and plays generated audio.
The workflow for the user is:

1. Click the Load example button to load sensor data into the text input, or paste a single sensor snapshot JSON from the apps/data/iot_music_samples.json file into the textarea.
2. Click “Generate Music” to call the POST /api/iot/generate-music route.
3. The app displays the generated prompt, a brief (expandable) description, and an HTML5 audio player.
4. Optionally, open “View History” to fetch recent records and replay saved tracks.

These are the server routes used by the client-side UI:

| Method & Route | Trigger in UI | Purpose |
|---|---|---|
| POST /api/iot/generate-music | Generate Music button | Full pipeline: brief → prompt → music → save |
| GET /api/music/history | View History modal | Load saved generations |
| GET /audio/ | Audio players in results/history | Stream ambient music from server |

The server returns JSON to the client. It contains all the data needed for the UI: the music prompt, the music brief, and audio metadata such as audio path and filename.

Note that the OpenAI model is used to generate both the music brief and the music prompt. The difference between the two is explained in the Generate Music Prompt section below.

Result UI

Besides the user input for the IoT data snapshot, after successfully generating ambient music, the result user interface renders:

- The generated music prompt (plus music brief details)
- The music player, its information, and the download link

History UI

When the user clicks the View History button, the app changes state to display all generated music, associated metadata, simplified IoT data, music briefs, and prompts.

Generate Music Prompt

Music Brief

This project generates a music brief before the final prompt to provide flexibility and a clear separation of concerns. The brief normalizes noisy IoT data into structured parameters, and the same brief can be reused with other (including non‑OpenAI) models without changing the mapping, making it robust for real‑world conditions.
Here is an example of the music brief:

    {
      "mood": "soothing",
      "energy": 48,
      "tension": 30,
      "bpm": [50, 64],
      "duration_sec": 60,
      "loopable": true,
      "key_suggestion": "A minor",
      "instrument_focus": [
        "warm pads",
        "soft piano",
        "breathy synth",
        "warm low strings",
        "subtle low percussion"
      ],
      "texture_notes": "Airy, sparse texture with warm low mids and a gentle high-frequency roll-off to avoid brightness.",
      "rationale": "CO2 >1000 ppm and rising calls for lower-energy, soothing airiness; occupancy is low and temp/humidity are ideal, so use sparse warm timbres and minimal rhythmic drive to reduce stress."
    }

Music brief generation is handled by generateMusicBrief(sensorSnapshot). It takes a single IoT sensor snapshot and uses the OpenAI model gpt-5-mini to produce the brief. The full code can be found in the libs\openai.ts file. The important part of the code is the AI system prompt:

    const systemPrompt = `
    You are an assistant that converts building sensor snapshots into a concise “music brief” for an ambient soundtrack generator.
    Return ONLY compact JSON with these fields:
    {
      "mood": "calm|focused|energizing|soothing|alert|uplifting|neutral",
      "energy": 0-100,
      "tension": 0-100,
      "bpm": [low, high],
      "duration_sec": number,
      "loopable": true|false,
      "key_suggestion": "A minor|D minor|C major|… (optional)",
      "instrument_focus": ["pads","soft piano","light percussion", …],
      "texture_notes": "short sentence on space/density/brightness",
      "rationale": "1–2 sentences mapping readings→choice"
    }

    Decision rules:
    – High CO2 (>1000 ppm) or high VOC (>200) → lower energy (35–55), soothing/airiness to reduce stress; avoid bright highs.
    – High occupancy (>25) with good air (CO2 < 800) → moderate energy (55–70) and gentle momentum; keep distractions low (no sharp transients).
    – High noise (>60 dBA) → simpler textures, fewer rhythmic accents; tighten BPM range.
    – Productivity_score < 60 → light uplift (energy +10), but stay minimal.
    – Temperature 22–24°C & humidity 45–55% is ideal; if outside, reduce tension slightly and favor warm timbres.

    Prefer keys: minor for calming/focus, major for uplifting.
    Keep outputs steady and minimal; no reactivity to single-sample spikes—assume 10–15 min trend.
    `;

This system prompt steers the model to create a music brief with a pre-defined data structure using decision rules. If you want to enhance this project, this is the crucial part where you can adjust the decision rules to your requirements.

Music Prompt

The music prompt is generated using the generateMusicPrompt(musicBrief) function. This function calls the OpenAI model gpt-5-mini to generate a music prompt based on the music brief input.

    const response = await openai.responses.create({
      model: "gpt-5-mini",
      input: [
        { role: "developer", content: [{ type: "input_text", text: systemPrompt }] },
        { role: "user", content: [{ type: "input_text", text: JSON.stringify(brief, null, 2) }] },
      ],
      text: { format: { type: "text" }, verbosity: "medium" },
      reasoning: { effort: "medium", summary: "auto" },
      store: false,
    } as any);

What’s important here is the system prompt that is passed to the model.

    const systemPrompt = `
    You convert an internal JSON "music brief" into a concise prompt for a generative music API.
    Rules:
    – Output 3–5 short lines, max ~450 characters total.
    – No meta commentary, no JSON, no emojis.
    – Include: mood, energy/tension, BPM range, duration, loopable flag, (optional) key, instruments, texture, goal.
    – Avoid sharp/bright transients when asked; keep language precise and production-safe.
    – Never invent values not present in the brief; default only when missing.
    Example:
    "Ambient track for a focused open office.
    Mood: focused, energy 62/100, tension 35/100.
    Tempo: 84–92 BPM, loopable, ~240s. Key: D minor.
    Instruments: warm pads, soft piano, light shaker, subtle bass.
    Texture: low-density, gentle movement, softened highs; avoid sharp transients and bright cymbals.
    Goal: steady momentum that supports concentration without masking speech."
    `;

Again, you can customize this system prompt to meet your own project requirements before feeding it to music generation. The full source code for music prompt generation is in the libs\openai.ts file.

This is an example of the generated music prompt:

    "Calm. Energy 60/100, tension 25/100.\nTempo: 58–64 BPM, duration ~60s, loopable. Key: A minor.\nInstruments: warm pads, soft electric piano, subtle low bass, minimal brushed percussion.\nTexture: sparse, warm, low‑mid focused with airy pads and subdued transients; avoid sharp/bright transients to prevent masking ambient noise. Goal: gentle uplift and comfort without masking background."

Generate Ambient Music

After the music brief and music prompt are generated, the next step is to generate the ambient music. This workflow is handled by the composeMusic() function (full source code in the libs\elevenlabs.ts file):

    export async function composeMusic({
      prompt,
      music_length_ms = 60000,
      model_id = "music_v1",
      apiKey = process.env.ELEVENLABS_API_KEY,
    }: ComposeParams): Promise<ArrayBuffer> {
      if (!apiKey) {
        throw new Error('ElevenLabs API key not configured');
      }

      const response = await fetch("https://api.elevenlabs.io/v1/music", {
        method: "POST",
        headers: {
          "xi-api-key": apiKey,
          "Content-Type": "application/json",
        },
        body: JSON.stringify({ prompt, music_length_ms, model_id }),
      });

      if (!response.ok) {
        const errorText = await response.text();
        const err: any = new Error(`ElevenLabs API error: ${errorText}`);
        err.status = response.status;
        throw err;
      }

      return await response.arrayBuffer();
    }

The code calls the ElevenLabs Music API with the music_v1 model. In this project, the duration of the generated music is hardcoded to 60 seconds (1 minute). You can change this directly in the source code by editing the music_length_ms = 60000 default. The default audio output format from the ElevenLabs Music API is mp3_44100_128.
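For readers who want to poke at the endpoint outside Node.js, here is a rough Python sketch of the same request that composeMusic() builds. The URL, header names, and body fields are copied from the function above; the helper name build_music_request and the placeholder API key are invented here, and the request is only constructed, not sent.

```python
import json
import urllib.request

# Endpoint taken from composeMusic() above.
ELEVENLABS_MUSIC_URL = "https://api.elevenlabs.io/v1/music"


def build_music_request(prompt: str, api_key: str,
                        music_length_ms: int = 60000,
                        model_id: str = "music_v1") -> urllib.request.Request:
    """Hypothetical helper mirroring composeMusic(): build the POST without sending it."""
    if not api_key:
        raise ValueError("ElevenLabs API key not configured")
    body = json.dumps({
        "prompt": prompt,
        "music_length_ms": music_length_ms,
        "model_id": model_id,
    }).encode("utf-8")
    return urllib.request.Request(
        ELEVENLABS_MUSIC_URL,
        data=body,
        method="POST",
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
    )


# Placeholder key and prompt, for illustration only.
req = build_music_request("Calm ambient pads, 58-64 BPM, loopable", api_key="YOUR_API_KEY")
print(req.get_method(), req.full_url)
print(json.loads(req.data)["music_length_ms"])
```

Passing the request to urllib.request.urlopen(req) and calling .read() on the response would return the raw response bytes, which you could then write to an .mp3 file in the same way the Node.js server does.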
The API also supports several other formats; for a complete list, refer to the official documentation.

Database

The container type used in this project is a collection, and the schema for the data is defined by the MusicGenerationRecord interface:

    export interface MusicGenerationRecord {
      timestamp: string;
      zone: string;
      temperature_c: number;
      humidity_pct: number;
      co2_ppm: number;
      voc_index: number;
      occupancy: number;
      noise_dba: number;
      productivity_score: number;
      trend_10min_co2_ppm_delta: number;
      trend_10min_noise_dba_delta: number;
      trend_10min_productivity_delta: number;
      music_brief: string;
      music_prompt: string;
      audio_path: string;
      audio_filename: string;
      music_length_ms: number;
      model_id: string;
      generation_timestamp: string;
    }

If you have access to the GridDB Cloud dashboard, you will see these columns created based on the interface fields and their types.

Save Data

On every successful music generation, the app saves the IoT data, music brief, music prompt, audio path, audio filename, and timestamp. The function responsible for this is saveMusicGeneration(musicRecord). It first checks that the music_generations container exists, and then saves the data to the database.

    export async function saveMusicGeneration(record: MusicGenerationRecord): Promise<void> {
      if (!GRIDDB_CONFIG.griddbWebApiUrl) {
        console.warn('⚠️ GridDB not configured, skipping database save');
        return;
      }

      await initGridDB();

      try {
        const client = getGridDBClient();
        const containerName = 'music_generations';

        console.log(`💾 Saving music generation record for zone: ${record.zone}`);

        // Generate a unique ID that fits in INTEGER range (max 2,147,483,647)
        // Use a combination of current time modulo and random number
        const timeComponent = Date.now() % 1000000; // Last 6 digits of timestamp
        const randomComponent = Math.floor(Math.random() * 1000); // 3 digit random
        const id = timeComponent * 1000 + randomComponent;

        // Prepare the data object for insertion with proper date formatting
        const data = {
          id,
          timestamp: new Date(record.timestamp),
          zone: record.zone,
          temperature_c: record.temperature_c,
          humidity_pct: record.humidity_pct,
          co2_ppm: record.co2_ppm,
          voc_index: record.voc_index,
          occupancy: record.occupancy,
          noise_dba: record.noise_dba,
          productivity_score: record.productivity_score,
          trend_10min_co2_ppm_delta: record.trend_10min_co2_ppm_delta,
          trend_10min_noise_dba_delta: record.trend_10min_noise_dba_delta,
          trend_10min_productivity_delta: record.trend_10min_productivity_delta,
          music_brief: record.music_brief,
          music_prompt: record.music_prompt,
          audio_path: record.audio_path,
          audio_filename: record.audio_filename,
          music_length_ms: record.music_length_ms,
          model_id: record.model_id,
          generation_timestamp: new Date(record.generation_timestamp)
        };

        // Use the fixed insert method that now handles schema-aware transformation
        await client.insert({ containerName, data: data });

        console.log(`✅ Music generation record saved to GridDB (ID: ${id}, Zone: ${record.zone}, File: ${record.audio_filename})`);
      } catch (error) {
        console.error('❌ Failed to save music generation record:', error);
        throw error;
      }
    }

Read Data

To show the history of music generations, the app needs to read data from the database. This task is handled internally by the getMusicGenerations() function.

    const client = getGridDBClient();
    const containerName = 'music_generations';

    console.log(`📊 Retrieving ${limit} music generation records from GridDB`);

    const results = await client.select({
      containerName,
      orderBy: 'generation_timestamp',
      order: 'DESC',
      limit
    });

The client.select() function is basically a wrapper for SQL SELECT. The full source code for this function is in the libs\griddb.ts file.

Node.js Server

All backend functionality is handled by the Node.js server. It exposes a few routes that can be used by the client application or for manual API testing.

Server Routes

| Method | Path | Purpose |
|---|---|---|
| GET | /api/health | Health check with current timestamp |
| POST | /api/iot/generate-music | Generate music based on the IoT data |
| GET | /api/music/history | List past generations |

The server also saves the generated music file into the local public/audio directory. It generates a clean MP3 filename using the generateAudioFilename(zone, timestamp) function and writes the audio buffer to apps/public/audio/. The function then returns the public URL path /audio/. The generated music is served as static files, so any /audio/.mp3 URL is directly accessible over HTTP. You can open these files directly in a browser.

Further Enhancements

This project is a simple prototype of what we can do using IoT, AI and the GridDB database. In the real scenario, you need to wire the app with real IoT sensor

As a continuation of our previous blog, GridDB IoT Hackathon Recap (Part 1 of 2): The Online Idea Phase, we will now recount the second part of the GridDB IoT Hackathon. As noted, the first part of this event was an online round open to anybody willing to travel to Bengaluru if they were selected as one of the five finalists. You can see the gallery of all submissions here: GALLERY DIRECT LINK. From within the gallery you can already see which teams made it to the second, in-person round.

The official winners of the hackathon, as determined by the panel of judges, were as follows:

- First Place: Deevia Software (Bengaluru) – Built a GenAI-based Enterprise Document Management Platform.
- Second Place: Wimera (Bengaluru) – Created an IoT Proof-of-Concept (PoC) for Industrial Machines.
- Third Place: VitalWatch (Maharashtra) – Developed a Preventive Risk Disease PoC.
- Fourth Place: Richie Rich (Bengaluru) – Designed a Financial Analytics PoC.
- Fifth Place: GooRoo Mobility India (Gujarat) – Built a low-cost remote Healthcare Solution PoC.

During the finals, teams received direct mentorship and technical support from Toshiba’s GridDB engineers. A member of the winning team, Deevia Software, noted that the GridDB Cloud platform made it extremely easy to efficiently ingest and query time-series data under a tight deadline, allowing them to focus on designing their solution rather than worrying about infrastructure.

For the remainder of the article, we will briefly go over each project; for more details on the event itself, you can read the official press release here: https://toshiba-india.com/pr-toshiba-announces-winners-of-gridDB-cloud-IoT-hackathon-highlighting-industry-ready-real-time%20-solutions-from-across-india.aspx.

The Projects

Part of what made the hackathon so special was the breadth of topics in the submitted ideas. For instance, of the five finalists, one was based on generative AI, two were based on health care, one was based on industrial IoT factory work, and the other was based on the financial sector. I would like to briefly describe each project, and of course, if more information is desired, we encourage all readers to look at the hackathon gallery, as it contains every project’s original submission.

Deevia

This project was unique in that it used GridDB in a way not necessarily envisioned by the GridDB team. Rather than focusing on IoT sensor data, Deevia built an AI-powered document management platform using Python, FastAPI, and React, with GridDB as the backbone via JPype. Their core insight was clever: instead of a traditional relational database, they used a container-per-file architecture where each uploaded document gets its own GridDB container, enabling parallel reads and writes without contention. OCR via PaddleOCR extracts text from scanned files, and Llama 3.1 powers semantic search and chat over the resulting knowledge base. They even used GridDB’s built-in partition expiry to handle chat history cleanup, eliminating the need for Redis or cron jobs entirely.

At a high level, the “GenAI-based Enterprise Document Management Platform” means that they can feed documents into their system, use OCR to convert the scanned content into raw text, save those results, and then use GridDB’s raw query speed to very quickly read the text data whenever a user query to the LLM needs data from the documents in question. Deevia also used the key-container data architecture to silo off documents from users on a per-need basis (i.e., if user A should not have access to a certain class of documents, they simply won’t have permission to read from that container). Overall, I recommend going and reading their presentation, as it was fascinating work.
Wimera

Wimera, while also a strong contender, was on the opposite end of the spectrum: their use case is exactly the kind of project GridDB was designed for. Built using Python, Node.js, and Angular on top of Azure IoT Hub and Azure Event Hub, the system ingests machine telemetry every few seconds via MQTT/AMQP into GridDB Cloud’s time-series containers. Azure Functions handle both ingestion and KPI aggregation, computing hourly and daily metrics automatically. The result is a fully connected pipeline from machine to cloud to dashboard that gives factory floors real-time visibility into machine status, energy consumption, and production counts, an industrial IoT workload where GridDB shines.

VitalWatch

VitalWatch, one of the two health submissions, paints an optimistic picture of a future where rural communities can better track and manage the growing risk of diabetes and hypertension. The stack is impressively thorough: wearable sensors transmit readings via Bluetooth to a local gateway, which publishes to an MQTT broker. A Node.js service ingests the data into GridDB Cloud in real time, while a Python service using Pandas, Scikit-learn, and TensorFlow LSTM models runs rolling averages and predictive spike detection. Doctors get Grafana dashboards for trend visualization, and high-risk events trigger SMS alerts via Twilio. Although the presentation focused on the national crisis in India, the project’s impact could truly be worldwide since diabetes is on the rise everywhere.

Richie Rich

Though perhaps not immediately obvious when considering GridDB’s typical usage, financial tick data is actually a natural fit for a time-series database. The Richie Rich team built a unified portfolio tracker using FastAPI and Python on the backend with React 18, TypeScript, and Tailwind CSS on the frontend.
Price data for stocks, crypto, and commodities is fetched from the CoinGecko API and persisted to GridDB Cloud’s time-series containers every 20 seconds. An XGBoost classifier trained on historical GridDB data then generates buy/sell/hold recommendations, and GitHub Actions automates weekly CSV portfolio exports for compliance and backtesting. Nifty!

GooRoo Mobility India (Gujarat)

This project was the other health entry and was also a very strong submission. The team built a real hardware IoT solution using an ESP32 microcontroller paired with a MAX30102 sensor for heart rate and SpO2 and an LM35 for body temperature. The ESP32 transmits readings as JSON over WiFi to a lightweight Python Flask REST API, which validates and stores the data in GridDB Cloud’s time-series containers. A frontend dashboard built in HTML/CSS/JS with Chart.js displays live vitals with color-coded alerts and historical trends. The passion from the team was palpable: they were designing an affordable remote monitoring solution to reduce costly and time-consuming doctor visits in underserved areas, and we are looking forward to what can come of it.

Conclusion

Once again, we were blown away by the quality and breadth of submissions. What stood out across all five projects was how naturally GridDB’s time-series model fit into domains well beyond traditional IoT, from AI document intelligence to financial analytics to real-time patient monitoring. We highly encourage all readers to explore the full hackathon gallery here: Hackathon

Introduction

In large factories, accidents usually do not happen suddenly. They often begin with small changes in machine behavior and travel from one machine to another. For example, if a boiler becomes slightly hotter, it may later cause vibration in another machine connected to the same process. The problem isn’t a lack of sensors; most factories have thousands of them. The real problem is that these sensors don’t talk to each other. Most systems only look at one machine at a time. They miss the “connection” between a hot boiler and a vibrating crusher. Here, we didn’t just build a dashboard to show numbers. We built an Industrial Safety Layer that “connects the dots.” By using GridDB, the system can track how machine conditions change across the factory and identify risks early.

Why Time-Series Databases Matter for Industrial IoT

Imagine you are looking at a machine’s temperature and it shows 90°C. Is that a problem? If it was 30°C a minute ago and suddenly reached 90°C, the machine may be heading toward a serious failure. If it has been around 90°C for several hours, it may simply be operating within its normal high-temperature cycle. A single sensor reading is just a number. But a sequence of readings over time tells a story. This type of information is known as time-series data. In industrial environments, machines generate thousands of such readings every second. Traditional databases, often used for typical web applications, can struggle when storing and querying large volumes of timestamped data. Time-series databases are designed specifically for this purpose. They work like security footage for machines, allowing engineers to look back at recent sensor readings and understand whether a machine is moving toward a potentially unsafe condition.

Why GridDB Cloud for Industrial Monitoring

Industrial monitoring systems need a database that can store large amounts of sensor data and allow easy access to recent readings.
GridDB Cloud provides an environment where the storage and management of such machine data are handled efficiently, allowing monitoring applications to focus on analyzing system conditions instead of managing database infrastructure. GridDB is a highly scalable, memory-first NoSQL database designed specifically for high-frequency time-series data, making it well-suited for industrial IoT workloads.

We use GridDB Cloud to:

- Store continuous sensor data using time-series containers with timestamp-based row keys.
- Maintain a fixed schema for predictable and fast reads/writes.
- Ingest data in memory first and persist it safely to disk.
- Query recent time windows efficiently for early-warning detection.
- Correlate multiple sensor streams in real time.
- Replay sensor data before an alert for incident analysis.
- Support explainable alerts by allowing fast access to recent sensor history.

Without GridDB:

- Real-time correlation would be slow.
- High-frequency ingestion would be difficult.
- Incident replay would be inefficient.

System Architecture

In this project, we simulate a simple monitoring system for a sugar mill. For simplicity, we consider three machines and monitor them using sensors that track values such as temperature, vibration, and power usage. The sensor data is generated through a simulator and stored in GridDB Cloud. The system then analyzes recent machine data to check if any unusual patterns appear and shows the results on a monitoring dashboard. The overall system consists of the following main components:

Sensor Simulation Layer
A Python-based simulator generates sensor readings for the machines. It produces values such as temperature, vibration, and power usage at regular intervals to imitate how real industrial sensors send data continuously.

Data Ingestion Layer
The generated sensor readings are processed by the backend application. This layer receives the simulated data and prepares it for storage in the database.
GridDB Cloud Database
GridDB Cloud stores the machine sensor data generated during the simulation. It acts as the central data layer from which the application can retrieve recent machine readings when evaluating system conditions.

Monitoring Dashboard
A web-based dashboard displays the machine status and recent alerts. It visualizes sensor values and helps users observe how machine conditions change during monitoring.

Setting Up GridDB Cloud

To store machine telemetry data, a GridDB Cloud instance can be deployed through the Microsoft Azure Marketplace. After subscribing to the service, users receive the cluster connection details including the notification provider address, cluster name, database name, and authentication credentials. Using the GridDB Python client, applications connect to the GridDB cluster through the native API using the connection details provided in the GridDB Cloud dashboard. The following example shows how a Python application can establish a connection to GridDB Cloud.

    import griddb_python as griddb
    import threading

    # NOTIFICATION_MEMBER, CLUSTER_NAME, USERNAME and PASSWORD are defined
    # elsewhere (the project reads its credentials from environment variables).
    _local = threading.local()

    def get_store():
        """Return a per-thread GridDB connection to avoid concurrent access errors."""
        if not hasattr(_local, "store") or _local.store is None:
            factory = griddb.StoreFactory.get_instance()
            _local.store = factory.get_store(
                notification_member=NOTIFICATION_MEMBER,
                cluster_name=CLUSTER_NAME,
                database="df321dsdJF",
                username=USERNAME,
                password=PASSWORD
            )
        return _local.store

Once connected, the application can create containers and store the sensor data generated by the monitoring system.

Project Overview

This project builds a smart safety monitoring system for a sugar mill. The system monitors three key machines in the production process: the Boiler, the Crusher, and the Centrifugal. Sensor data such as temperature, vibration, and power usage is generated and stored in GridDB Cloud, where it can be analyzed to observe how machine behavior changes over time.
Key ideas behind the system:

- System-wide monitoring: Instead of analyzing machines separately, the system observes how multiple machines behave together.
- Pattern detection: It identifies small but meaningful changes in sensor values that may signal a developing issue.
- Failure propagation awareness: It models how a problem in one machine can affect other machines in the process.

Simulating Industrial Sensor Data

Since real industrial sensors were not available, the system uses a sensor simulator to generate realistic machine telemetry. The simulator creates readings for three machines in a sugar mill:

- Boiler: Measures temperature, vibration, and power usage.
- Crusher: Measures vibration, temperature, and power usage.
- Centrifugal: Measures vibration, temperature, and power usage.

To make the simulation more realistic, the dataset is generated in three phases:

- Normal: All machines operate within safe limits.
- Escalation: The boiler begins to overheat, indicating a developing issue.
- Cascade: The boiler failure spreads to the crusher and then the centrifugal, simulating a chain reaction.

This models how failures in industrial environments often propagate through connected machines rather than occurring in isolation. To make the simulation mathematically sound and physically realistic, the system avoids generating flat, hardcoded values.
Instead, it uses proportional interpolation and random noise to calculate sensor values dynamically:

    import random

    def interpolate(low, high, progress=None):
        """Smoothly interpolate between two values with optional random progress."""
        if progress is None:
            progress = random.uniform(0.0, 1.0)
        return low + progress * (high - low)

    def make_row(ts, machine_id, target_state):
        """Build a realistic sensor row using interpolation instead of hardcoded offsets."""
        t_nrm_low, t_nrm_high = MACHINES[machine_id]["normal"]["temperature"]
        t_warn = MACHINES[machine_id]["thresholds"]["temperature"]["warning"]

        # Add realistic environmental noise
        noise_t = random.uniform(-1.5, 1.5)

        if target_state == "Normal":
            # Calculate normal resting value + physical jitter
            # (the remaining columns are elided in this excerpt)
            return [ts, interpolate(t_nrm_low, t_nrm_high) + noise_t, ...]
        elif target_state == "Warning":
            # Proportionally ramp upward toward the warning threshold
            prog = random.uniform(0.1, 0.6)
            return [ts, interpolate(t_nrm_high, t_warn, prog) + noise_t, ...]

With this approach, simulated sensors drift naturally within a range (and occasionally spike) rather than resting rigidly at a static median.

The complete implementation can be found in the project repository: github.com/ritigya03/GridDB-Industrial-Safety-Monitoring

Live Escalation Simulation

To demonstrate how failures develop over time, the project also includes a small Python script that simulates a live escalation scenario in the sugar mill. Instead of inserting a full dataset at once, the script gradually sends sensor readings to GridDB Cloud to mimic how problems evolve in real industrial systems. The simulation follows three stages:

- Normal Operation – All machines operate within safe limits.
- Boiler Escalation – The boiler temperature gradually increases toward critical levels.
- Cascade Failure – Instability propagates from the boiler to the crusher and eventually to the centrifugal machine.
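The three stages can be expressed as a small function that maps the simulation's tick counter to a target state for each machine. The sketch below is only one possible shape for such a helper. The name `determine_phase` matches the helper called in the project's escalation loop, but the body is an assumption: the tick boundaries (`NORMAL_TICKS`, `ESCALATION_TICKS`), the per-machine delays in `CASCADE_DELAY`, and the three-tick warning ramp are illustrative values, not taken from the repository.

```python
# Hypothetical phase schedule: all tick counts are illustrative assumptions.
NORMAL_TICKS = 10        # stage 1: every machine is healthy
ESCALATION_TICKS = 10    # stage 2: only the boiler degrades

# Assumed delay (in ticks) before the cascade reaches each machine.
CASCADE_DELAY = {"boiler": 0, "crusher": 5, "centrifugal": 10}


def determine_phase(tick, machine_id):
    """Return the target state for a machine at a given simulation tick."""
    if tick < NORMAL_TICKS:
        return "Normal"
    if tick < NORMAL_TICKS + ESCALATION_TICKS:
        # Boiler escalation: downstream machines are still healthy.
        return "Warning" if machine_id == "boiler" else "Normal"
    # Cascade: the boiler is critical, and each downstream machine follows
    # once its assumed delay has elapsed.
    ticks_into_cascade = tick - (NORMAL_TICKS + ESCALATION_TICKS)
    delay = CASCADE_DELAY[machine_id]
    if ticks_into_cascade < delay:
        return "Normal"
    if machine_id != "boiler" and ticks_into_cascade < delay + 3:
        return "Warning"
    return "Critical"


if __name__ == "__main__":
    for tick in (0, 12, 20, 26, 33):
        print(tick, {m: determine_phase(tick, m) for m in CASCADE_DELAY})
```

Keeping the schedule in a pure function like this makes the demo repeatable: the same tick always produces the same target state, and only the sensor noise varies between runs.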
During the simulation, new sensor readings are continuously inserted into GridDB Cloud. This allows the monitoring system and dashboard to react in real time as the cascade develops. A simplified example of the escalation trigger is shown below:

    import time
    from datetime import datetime, timezone

    def trigger_cascade():
        """Simulates a live cascade that persists until resolved in the dashboard."""
        store = insert_data.get_gridstore()
        start_sim()
        i = 0
        while is_sim_active():
            ts = datetime.now(timezone.utc)
            batch = {}
            for machine_id in MACHINES:
                # Dynamically generate realistic telemetry
                row = make_row(ts, machine_id, determine_phase(i, machine_id))
                batch[MACHINES[machine_id]["container"]] = [row]
            # Write one row per machine in a single request
            store.multi_put(batch)
            i += 1
            time.sleep(1)

The complete implementation can be found in the project repository: github.com/ritigya03/GridDB-Industrial-Safety-Monitoring

When the user clicks the “Issue Addressed” button in the dashboard, the backend disables the simulation flag. This causes the escalation loop to stop, and the script injects a final batch of normal sensor readings into GridDB Cloud. These healthy readings immediately restore the system to a stable state on the monitoring dashboard.

Storing Sensor Data in GridDB Cloud

After generating the simulated sensor readings, the data is stored in GridDB Cloud so it can be accessed by the monitoring system. Each machine is assigned its own TIME_SERIES container, where readings such as timestamp, temperature, vibration, and power consumption are stored. The timestamp acts as the key for each record, allowing the system to store and query machine telemetry in chronological order. Once the containers are created, the generated sensor dataset is inserted into GridDB so it can be used for monitoring and analysis.
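Each machine's container name and safe ranges are looked up in a shared `MACHINES` dictionary throughout the snippets in this article. A minimal sketch of what such a configuration could look like is shown below, inferred from how the snippets access it: `["container"]`, `["normal"][sensor]` as a (low, high) pair, and `["thresholds"][sensor]["warning"]` or `["critical"]`. The helper names `machine_config` and `validate`, the container names, and every number are illustrative assumptions, not the project's actual configuration.

```python
def machine_config(container, normal, warning, critical):
    """Build one machine entry from per-sensor normal ranges and thresholds."""
    return {
        "container": container,
        "normal": dict(normal),
        "thresholds": {
            sensor: {"warning": warning[sensor], "critical": critical[sensor]}
            for sensor in normal
        },
    }


# Illustrative values only: names and ranges are assumptions.
MACHINES = {
    "boiler": machine_config(
        "boiler_telemetry",
        normal={"temperature": (60.0, 75.0), "vibration": (1.0, 3.0), "power": (40.0, 55.0)},
        warning={"temperature": 80.0, "vibration": 4.5, "power": 65.0},
        critical={"temperature": 88.0, "vibration": 6.0, "power": 75.0},
    ),
    "crusher": machine_config(
        "crusher_telemetry",
        normal={"temperature": (40.0, 55.0), "vibration": (2.0, 5.0), "power": (60.0, 80.0)},
        warning={"temperature": 65.0, "vibration": 7.0, "power": 95.0},
        critical={"temperature": 75.0, "vibration": 9.0, "power": 110.0},
    ),
    "centrifugal": machine_config(
        "centrifugal_telemetry",
        normal={"temperature": (35.0, 50.0), "vibration": (1.5, 4.0), "power": (30.0, 45.0)},
        warning={"temperature": 60.0, "vibration": 6.0, "power": 55.0},
        critical={"temperature": 70.0, "vibration": 8.0, "power": 65.0},
    ),
}


def validate(machines):
    """Check that every sensor satisfies low < high <= warning < critical."""
    for machine_id, cfg in machines.items():
        for sensor, (low, high) in cfg["normal"].items():
            thr = cfg["thresholds"][sensor]
            if not (low < high <= thr["warning"] < thr["critical"]):
                raise ValueError(f"{machine_id}.{sensor}: inconsistent thresholds")


validate(MACHINES)
```

Validating the ordering once at startup is a cheap way to catch a mistyped threshold before it produces misleading statuses on the dashboard.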
Creating a TIME_SERIES container

    def create_container(store, container_name):
        con_info = griddb.ContainerInfo(
            container_name,
            [
                ["timestamp", griddb.Type.TIMESTAMP],
                ["temperature", griddb.Type.DOUBLE],
                ["vibration", griddb.Type.DOUBLE],
                ["power", griddb.Type.DOUBLE],
            ],
            griddb.ContainerType.TIME_SERIES
        )
        return store.put_container(con_info)

The complete implementation can be found in the project repository: github.com/ritigya03/GridDB-Industrial-Safety-Monitoring

Inserting Data into GridDB Cloud

    from datetime import datetime

    def insert_data(store, dataset):
        batch = {}
        for machine_id, readings in dataset.items():
            container_name = MACHINES[machine_id]["container"]
            rows = []
            for r in readings:
                ts = datetime.fromisoformat(r["timestamp"].replace("Z", "+00:00"))
                rows.append([ts, r["temperature"], r["vibration"], r["power"]])
            batch[container_name] = rows
        store.multi_put(batch)

The complete implementation can be found in the project repository: github.com/ritigya03/GridDB-Industrial-Safety-Monitoring

The multi_put operation allows rows for multiple containers to be written in a single request. This significantly improves ingestion efficiency when handling continuous machine telemetry streams.

Continuous Telemetry Stream

In addition to inserting the initial dataset, the system also supports a background data producer that continuously sends normal machine telemetry to GridDB Cloud. This producer acts as a heartbeat, ensuring that the monitoring dashboard always receives fresh sensor data. When the escalation simulation is triggered, the producer automatically pauses to avoid overwriting the simulated failure sequence. Once the simulation ends, the producer resumes sending healthy readings, allowing the system to recover naturally.

Querying Sensor Data and Detecting Pre-Incident Conditions

Once sensor data is stored in GridDB Cloud, the monitoring system queries recent readings to evaluate machine conditions.
The system retrieves the latest records for each machine and analyzes whether sensor values are approaching unsafe ranges. GridDB’s Time-Series Query Language (TQL) allows the system to efficiently fetch the most recent sensor readings from each machine container.

    def query_recent(store, machine_id, limit=20):
        container = store.get_container(MACHINES[machine_id]["container"])
        query = container.query(f"select * order by timestamp desc limit {limit}")
        rs = query.fetch()
        readings = []
        while rs.has_next():
            row = rs.next()
            readings.append({
                "temperature": row[1],
                "vibration": row[2],
                "power": row[3],
            })
        return readings

The complete implementation can be found in the project repository: github.com/ritigya03/GridDB-Industrial-Safety-Monitoring

To avoid sudden status flickering near thresholds, the system evaluates machine conditions using a small rolling window of recent readings. By averaging the latest values, the monitoring logic becomes more stable and resistant to temporary sensor noise. The averaged values are then passed to the scoring function:

    def machine_risk_score(machine_id, avg_t, avg_v, avg_p):
        """Calculates a 0–100 risk score based on continuous sensor severity."""
        # Look up this machine's normal ranges and thresholds
        # (same MACHINES layout that make_row uses)
        nrm = MACHINES[machine_id]["normal"]
        thr = MACHINES[machine_id]["thresholds"]

        s_t = sensor_severity(avg_t, nrm["temperature"][1],
                              thr["temperature"]["warning"], thr["temperature"]["critical"])
        s_v = sensor_severity(avg_v, nrm["vibration"][1],
                              thr["vibration"]["warning"], thr["vibration"]["critical"])
        s_p = sensor_severity(avg_p, nrm["power"][1],
                              thr["power"]["warning"], thr["power"]["critical"])

        weighted = (
            s_t * SENSOR_WEIGHTS["temperature"]
            + s_v * SENSOR_WEIGHTS["vibration"]
            + s_p * SENSOR_WEIGHTS["power"]
        )
        return min(round(weighted * 100), 100)

The complete implementation can be found in the project repository: github.com/ritigya03/GridDB-Industrial-Safety-Monitoring

Risk Scoring

Instead of a simple status label, each machine also receives a continuous risk score (0–100) based on exactly how far its sensor values have drifted from safe ranges.
For example, a boiler at 89°C and a boiler at 200°C both count as “Critical” in a simple system. With continuous scoring, the second scenario scores significantly higher because it is far more dangerous. The fleet-wide score is a weighted average across all machines; the boiler contributes more to the overall score because a boiler failure affects everything downstream. Active cascade conditions add extra penalty points on top.

Cascade Detection

The system understands that machines in a sugar mill are connected. If the boiler fails, the crusher that depends on its steam will eventually be affected too. These relationships are defined as rules:

    CASCADE_RULES = [
        {"source": "boiler", "target": "crusher",
         "message": "Boiler instability propagating to Crusher…"},
        {"source": "crusher", "target": "centrifugal",
         "message": "Crusher anomaly affecting Centrifugal…"},
        {"source": "boiler", "target": "centrifugal",
         "message": "Full-Chain Cascade detected…"},
    ]

When a source machine is in a dangerous state and its downstream target also shows elevated readings, a cascade alert is triggered on the dashboard.

Building the Monitoring Dashboard

Visualization plays an important role in the monitoring system. To make machine conditions easier to understand, a web-based dashboard was created that displays machine status, sensor readings, cascade alerts, and the overall system risk score in real time. The interface is built using HTML, JavaScript, and Chart.js, which provides interactive charts for visualizing machine telemetry. The backend exposes a set of simple API endpoints that the dashboard polls periodically to retrieve updated monitoring data.
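To make the cascade rule and the fleet-wide score concrete, here is a minimal sketch of how the data behind a fleet status endpoint could be assembled. The rule list mirrors `CASCADE_RULES`; everything else is an assumption made for illustration: the function names (`detect_cascades`, `fleet_risk_score`, `build_fleet_payload`), the machine weights, the penalty per active cascade, and the choice of which statuses count as "dangerous" for a source or "elevated" for a target.

```python
CASCADE_RULES = [
    {"source": "boiler", "target": "crusher",
     "message": "Boiler instability propagating to Crusher"},
    {"source": "crusher", "target": "centrifugal",
     "message": "Crusher anomaly affecting Centrifugal"},
    {"source": "boiler", "target": "centrifugal",
     "message": "Full-Chain Cascade detected"},
]

# Assumed weights: the boiler counts most because everything downstream depends on it.
MACHINE_WEIGHTS = {"boiler": 0.5, "crusher": 0.3, "centrifugal": 0.2}
CASCADE_PENALTY = 5                   # assumed extra points per active cascade
DANGEROUS = {"Critical"}              # assumed: the source must be critical
ELEVATED = {"Warning", "Critical"}    # assumed: the target must be at least warning


def detect_cascades(statuses):
    """Return the message of every rule whose source is dangerous and target elevated."""
    return [
        rule["message"]
        for rule in CASCADE_RULES
        if statuses[rule["source"]] in DANGEROUS and statuses[rule["target"]] in ELEVATED
    ]


def fleet_risk_score(machine_scores, cascades):
    """Weighted average of per-machine 0-100 scores plus a cascade penalty, capped at 100."""
    weighted = sum(machine_scores[m] * w for m, w in MACHINE_WEIGHTS.items())
    return min(round(weighted + CASCADE_PENALTY * len(cascades)), 100)


def build_fleet_payload(statuses, machine_scores):
    """Assemble a dict with machines, cascades and risk_score keys."""
    cascades = detect_cascades(statuses)
    return {
        "machines": {m: {"status": s, "risk": machine_scores[m]} for m, s in statuses.items()},
        "cascades": cascades,
        "risk_score": fleet_risk_score(machine_scores, cascades),
    }


if __name__ == "__main__":
    statuses = {"boiler": "Critical", "crusher": "Warning", "centrifugal": "Normal"}
    scores = {"boiler": 90, "crusher": 50, "centrifugal": 10}
    print(build_fleet_payload(statuses, scores))
```

In the example at the bottom, only the boiler-to-crusher rule fires, so the weighted average of 62 is raised by one penalty to a fleet score of 67.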
    Endpoint             What it returns
    GET /api/fleet       (Main) Status of all machines + cascade alerts + risk score
    GET /api/machine/    Sensor history for a single machine (used for charts)
    GET /api/timeline    Log of escalation events over time

Below is a simplified example from the dashboard script showing how the system fetches fleet data and updates the interface.

    async function pollFleet() {
      try {
        const res = await fetch('/api/fleet');
        const data = await res.json();

        // Update each machine card
        for (const [machineId, status] of Object.entries(data.machines)) {
          setStatus(machineId, status.status, status.message, status.latest);
        }

        renderCascades(data.cascades || []);
        updateRiskScore(data.risk_score ?? 0);
      } catch (e) {
        console.error('Fleet poll failed', e);
      }
    }

The complete implementation can be found in the project repository: github.com/ritigya03/GridDB-Industrial-Safety-Monitoring

The dashboard calls the fleet endpoint every few seconds to keep the interface synchronized with the latest machine data stored in GridDB Cloud. Additional endpoints provide sensor history and timeline events, which are used to render trend charts and escalation timelines for operators.

Running the Project

Make sure GridDB Cloud is running and credentials are configured as environment variables. Then run the following in order:

    # Step 1: Create containers and seed historical data
    $ python src/insert_data.py

    # Step 2: Start the monitoring backend
    $ python src/app.py

    # Step 3 (optional): Keep dashboard alive with a live heartbeat
    $ python src/insert_data.py --live

    # Step 4 (optional): Trigger a live cascade demo
    $ python src/simulate_escalation.py

Open http://localhost:5000 to view the dashboard.

To make the demonstration clear and repeatable, the project uses two separate data-feeding mechanisms:

The Background Heartbeat (insert_data.py --live): This script provides a stable, healthy baseline so the dashboard stays active and “green” by default.
The Manual Escalation (simulate_escalation.py): This script temporarily “takes over” the dashboard with a developing incident to show how failures propagate.

Because the monitoring logic evaluates only the most recent readings stored in GridDB, recovery is automatic. Once the escalation script finishes, the background producer continues to send “Normal” data. These new healthy readings eventually “drown out” any temporary critical spikes, and the system returns to a safe state without requiring a manual reset.

Results and Dashboard Overview

After running the system, the monitoring dashboard displays the real-time status of all machines in the sugar mill simulation. The interface shows sensor readings for temperature, vibration, and power consumption, along with the current safety status of each machine.

Normal Operation

In the normal state, all machines operate within safe limits. The dashboard displays a low overall risk score and indicates that the factory is running under normal conditions.

As sensor values begin drifting toward warning thresholds, the system detects early warning patterns. Individual machines may enter a Warning state, and the overall risk score begins to increase.

When multiple sensors exceed warning thresholds simultaneously, the system identifies a Pre-Incident condition. If instability spreads between machines, the dashboard highlights cascade alerts to indicate a potential chain reaction across the production process.

Once the issue is addressed and sensor readings return to safe ranges, the monitoring system automatically returns to a stable state. The dashboard updates in real time, clearing cascade alerts and lowering the system risk score.

By visualizing machine telemetry, cascade alerts, and system risk levels together, the dashboard provides operators with a clear operational overview of the factory. This helps engineers detect early warning patterns and respond before issues escalate into serious failures.
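The status progression described in this section (Normal, Warning, Pre-Incident, then recovery) can be sketched as a small classifier over a rolling window of recent readings. This is an illustration under stated assumptions, not the project's code: the function name `classify_machine`, the window of five readings, and the threshold numbers are hypothetical, and the rule "two or more sensors averaging above their warning threshold means Pre-Incident" is just one reading of "multiple sensors exceed warning thresholds simultaneously".

```python
from statistics import mean

# Hypothetical warning thresholds for a single machine.
WARNING = {"temperature": 80.0, "vibration": 4.5, "power": 65.0}


def classify_machine(readings, window=5):
    """Classify a machine from the average of its most recent readings.

    `readings` is ordered newest first (as a descending query would return
    them); each item is a dict with temperature, vibration and power values.
    Returns a (status, breached_sensors) tuple.
    """
    recent = readings[:window]
    averages = {sensor: mean(r[sensor] for r in recent) for sensor in WARNING}
    breached = [s for s, avg in averages.items() if avg >= WARNING[s]]
    if len(breached) >= 2:
        return "Pre-Incident", breached
    if breached:
        return "Warning", breached
    return "Normal", breached


if __name__ == "__main__":
    healthy = [{"temperature": 70.0, "vibration": 2.0, "power": 50.0}] * 5
    # A single hot reading is averaged away, so the status does not flicker.
    spike = [{"temperature": 95.0, "vibration": 2.0, "power": 50.0}] + healthy[:4]
    # Sustained heat and vibration breach two thresholds at once.
    sustained = [{"temperature": 85.0, "vibration": 5.0, "power": 50.0}] * 5
    for name, data in (("healthy", healthy), ("spike", spike), ("sustained", sustained)):
        print(name, classify_machine(data))
```

The same averaging explains the automatic recovery: as healthy rows from the heartbeat fill the window, the averages fall back below the thresholds and the status returns to Normal on its own.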
Conclusion

Industrial accidents often develop gradually through small changes in machine behavior rather than sudden failures. Detecting these early signals requires systems that can store large volumes of sensor data and analyze how machine conditions evolve over time. In this project, we built a simple safety monitoring prototype using GridDB Cloud to store and query machine telemetry data. By combining simulated sensor readings with rule-based analysis, the system can detect early warning patterns and potential cascade failures across machines. In the future, this approach could be extended with machine learning models trained on historical sensor data in GridDB, enabling more advanced predictive maintenance and anomaly

With the advent of Large Language Models (LLMs), chatbot applications have become increasingly common, enabling more natural and intelligent interactions with data. In this article, you will see how to build a stock market chatbot using LangGraph, the OpenAI API, and GridDB Cloud. We will retrieve historical Apple stock price data from Yahoo Finance using the yfinance library, insert it into a GridDB container, and then query it using a chatbot built with LangGraph that uses the OpenAI GPT-4o model. GridDB is a high-performance time-series database designed for massive real-time workloads. Its support for structured containers, built-in compression, and lightning-fast reads and writes makes it ideal for storing and querying time series data such as stock market prices.

Installing and Importing Required Libraries

    !pip install -q yfinance
    !pip install langchain
    !pip install langchain-core
    !pip install langchain-community
    !pip install langgraph
    !pip install langchain_huggingface
    !pip install tabulate
    !pip uninstall -y pydantic
    !pip install --no-cache-dir "pydantic>=2.11,<3"

    import yfinance as yf
    import pandas as pd
    import json
    import datetime as dt
    import base64
    import requests
    import numpy as np

    from langchain_core.prompts import ChatPromptTemplate
    from langchain_openai import ChatOpenAI
    from langchain_core.output_parsers import StrOutputParser
    from langgraph.graph import START, END, StateGraph
    from langchain_core.messages import HumanMessage
    from langgraph.checkpoint.memory import MemorySaver
    from langchain_experimental.agents import create_pandas_dataframe_agent
    from langchain_openai import OpenAI
    from langchain.agents.agent_types import AgentType
    from typing_extensions import List, TypedDict
    from pydantic import BaseModel, Field
    from IPython.display import Image, display

Inserting and Retrieving Stock Market Data From GridDB

We will first import data from Yahoo Finance into a Python application.
Next, we will insert this data into a GridDB container and then retrieve it.

Importing Data from Yahoo Finance

The yfinance Python library allows you to import data from Yahoo Finance. You need to pass the ticker name, as well as the start and end dates, for the data you want to download. The following script downloads the Apple stock price data for the year 2024.

    import yfinance as yf
    import pandas as pd

    ticker = "AAPL"
    start_date = "2024-01-01"
    end_date = "2024-12-31"

    dataset = yf.download(ticker, start=start_date, end=end_date, auto_adjust=False)

    # 1. FLATTEN: keep the level that holds 'Close', 'High', …
    if isinstance(dataset.columns, pd.MultiIndex):
        # find the level index where 'Close' lives
        for lvl in range(dataset.columns.nlevels):
            level_vals = dataset.columns.get_level_values(lvl)
            if 'Close' in level_vals:
                dataset.columns = level_vals  # keep that level
                break
    else:
        # already flat – nothing to do
        pass

    # 2. Select OHLCV, move index to 'Date'
    dataset = dataset[['Close', 'High', 'Low', 'Open', 'Volume']]
    dataset = dataset.reset_index().rename(columns={'index': 'Date'})
    dataset['Date'] = pd.to_datetime(dataset['Date'])

    # optional: reorder columns
    dataset = dataset[['Date', 'Close', 'High', 'Low', 'Open', 'Volume']]
    dataset.columns.name = None
    dataset.head()

Output:

The above output indicates that the dataset comprises the daily close, high, low, and open prices and the trading volume for Apple stock. In the next section, you will see how to insert this data into a GridDB Cloud container.

Establishing a Connection with GridDB Cloud

After you create your GridDB Cloud account and complete the configuration settings, you can run the following script to see if you can access your database within a Python application.
    username = "your_user_name"
    password = "your_password"
    base_url = "your_griddb_host_url"

    url = f"{base_url}/checkConnection"

    credentials = f"{username}:{password}"
    encoded_credentials = base64.b64encode(credentials.encode()).decode()

    headers = {
        'Content-Type': 'application/json',  # Added this header to specify JSON content
        'Authorization': f'Basic {encoded_credentials}',
        'User-Agent': 'PostmanRuntime/7.29.0'
    }

    response = requests.get(url, headers=headers)

    print(response.status_code)
    print(response.text)

Output:

    200

The above output indicates that you have successfully connected with your GridDB Cloud host.

Creating a Container for Inserting Stock Market Data in GridDB Cloud

Next, we will insert the Yahoo Finance data into GridDB. To do so, we will add another column, SerialNo, which contains a unique key for each data row, as GridDB expects a unique key column in the dataset. Next, we will map the Pandas dataframe column types to GridDB data types.

    dataset.insert(0, "SerialNo", dataset.index + 1)
    dataset['Date'] = pd.to_datetime(dataset['Date']).dt.strftime('%Y-%m-%d')  # "2024-01-02"
    dataset.columns.name = None

    # Mapping pandas dtypes to GridDB types
    type_mapping = {
        "int64": "LONG",
        "float64": "DOUBLE",
        "bool": "BOOL",
        'datetime64': "TIMESTAMP",
        "object": "STRING",
        "category": "STRING",
    }

    # Generate the columns part of the payload dynamically
    columns = []
    for col, dtype in dataset.dtypes.items():
        griddb_type = type_mapping.get(str(dtype), "STRING")  # Default to STRING if unknown
        columns.append({
            "name": col,
            "type": griddb_type
        })

    columns

Output:

    [{'name': 'SerialNo', 'type': 'LONG'},
     {'name': 'Date', 'type': 'STRING'},
     {'name': 'Close', 'type': 'DOUBLE'},
     {'name': 'High', 'type': 'DOUBLE'},
     {'name': 'Low', 'type': 'DOUBLE'},
     {'name': 'Open', 'type': 'DOUBLE'},
     {'name': 'Volume', 'type': 'LONG'}]

The above output displays the dataset column names and their corresponding GridDB-compliant data types. The next step is to create a GridDB container.
To do so, you need to pass the container name, container type, and a list of column names and their data types.

    url = f"{base_url}/containers"
    container_name = "stock_db"

    # Create the payload for the POST request
    payload = json.dumps({
        "container_name": container_name,
        "container_type": "COLLECTION",
        "rowkey": True,  # Assuming the first column as rowkey
        "columns": columns
    })

    # Make the POST request to create the container
    response = requests.post(url, headers=headers, data=payload)

    # Print the response
    print(f"Status Code: {response.status_code}")

Adding Stock Data to GridDB Cloud Container

Once you have created a container, you must convert the data from your Pandas dataframe into JSON format and send a PUT request to insert the data into GridDB.

    url = f"{base_url}/containers/{container_name}/rows"

    # Convert dataset to list of lists (row-wise) with proper formatting
    def format_row(row):
        formatted = []
        for item in row:
            if pd.isna(item):
                formatted.append(None)  # Convert NaN to None
            elif isinstance(item, bool):
                formatted.append(str(item).lower())  # Convert True/False to true/false
            elif isinstance(item, (int, float)):
                formatted.append(item)  # Keep integers and floats as they are
            else:
                formatted.append(str(item))  # Convert other types to string
        return formatted

    # Prepare rows with correct formatting
    rows = [format_row(row) for row in dataset.values.tolist()]

    # Create payload as a JSON string
    payload = json.dumps(rows)

    # Make the PUT request to add the rows to the container
    response = requests.put(url, headers=headers, data=payload)

    # Print the response
    print(f"Status Code: {response.status_code}")
    print(f"Response Text: {response.text}")

Output:

    Status Code: 200
    Response Text: {"count":251}

If you see the above response, you have successfully inserted the data.

Retrieving Data from GridDB

After inserting the data, you can perform a variety of operations on the dataset. Let’s see how to import data from a GridDB container into a Pandas dataframe.

    container_name = "stock_db"

    url = f"{base_url}/containers/{container_name}/rows"

    # Define the payload for the query
    payload = json.dumps({
        "offset": 0,       # Start from the first row
        "limit": 10000,    # Limit the number of rows returned
        "condition": "",   # No filtering condition (you can customize it)
        "sort": ""         # No sorting (you can customize it)
    })

    # Make the POST request to read data from the container
    response = requests.post(url, headers=headers, data=payload)

    # Check response status and print output
    print(f"Status Code: {response.status_code}")
    if response.status_code == 200:
        try:
            data = response.json()
            print("Data retrieved successfully!")

            # Convert the response to a DataFrame
            rows = data.get("rows", [])
            stock_dataset = pd.DataFrame(rows, columns=[col for col in dataset.columns])

        except json.JSONDecodeError:
            print("Error: Failed to decode JSON response.")
    else:
        print(f"Error: Failed to query data from the container. Response: {response.text}")

    print(stock_dataset.shape)
    stock_dataset.head()

Output:

The above output shows the data retrieved from the GridDB container. We store the data in a Pandas dataframe, but you can store it in any other format you prefer. Once you have the data, you can build a variety of AI and data science applications on top of it.

Creating a Stock Market Chatbot Using GridDB Data

In this section, you will see how to create a simple chatbot with the LangGraph framework. The chatbot calls the OpenAI API to answer your questions about the Apple stock prices you just retrieved from GridDB.

Creating a Graph in LangGraph

To create a graph in LangGraph, you need to define its state. A graph's state contains the attributes that are shared between graph nodes. Since we only need to store questions and answers, we create the following graph state.

    class State(TypedDict):
        question: str
        answer: str

We will use create_pandas_dataframe_agent from LangChain to answer our questions, since we retrieved the data from GridDB into a Pandas dataframe.
We create the agent object and call it inside the run_llm() function that we define. We will use this function as our LangGraph node.

    api_key = "YOUR_OPENAI_API_KEY"

    llm = ChatOpenAI(model='gpt-4o',
                     api_key=api_key)

    agent = create_pandas_dataframe_agent(llm,
                                          stock_dataset,
                                          verbose=True,
                                          agent_type=AgentType.ZERO_SHOT_REACT_DESCRIPTION,
                                          allow_dangerous_code=True)

    def run_llm(state: State):
        question = state['question']
        response = agent.invoke(question)
        return {'answer': response['output']}

Finally, we define the graph for our chatbot. The graph consists of only one node, ask_question, which calls the run_llm() function. Inside that function, we invoke the agent created by create_pandas_dataframe_agent(), which answers questions about the dataset.

    graph_builder = StateGraph(State)
    graph_builder.add_node("ask_question", run_llm)
    graph_builder.add_edge(START, "ask_question")
    graph_builder.add_edge("ask_question", END)
    graph = graph_builder.compile()

    display(Image(graph.get_graph().draw_mermaid_png()))

Output:

The above output shows the flow of our graph.

Asking Questions

Let's test our chatbot by asking some questions. We first ask which month had the highest average opening price, and in which month day traders made the most profit.

    question = [HumanMessage(content="Which month had the highest average opening stock price? And what is the month where people made most profit in day trading?")]
    result = graph.invoke({"question": question})
    print(result['answer'])

Output:

The output above shows the chatbot's response. That is correct; I verified it manually using a Python script.

Let's ask it to be more creative and see if it finds any interesting patterns in the dataset.

    question = [HumanMessage(content="Do you find any interesting patterns in the dataset?")]
    result = graph.invoke({"question": question})
    print(result['answer'])

Output:

The above output shows the first part of the reply.
You can see that the chatbot drew a plot of the closing prices to help identify interesting patterns. The following output shows some of its observations about the dataset.

Output:

This article demonstrated how to create an OpenAI API-based chatbot that answers questions about data retrieved from GridDB. If you have any questions or need help with GridDB Cloud, you can post your query on Stack Overflow using the griddb tag. Our team will be happy to answer it.

For the complete code of this article, visit my GridDB Blogs GitHub
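
A side note on the type-mapping step: pandas reports datetime columns with a dtype name such as `datetime64[ns]`, so an exact dictionary lookup on the key `datetime64` never matches and such a column would fall back to STRING. That did not matter in this article because Date was formatted as text before the mapping ran. If you keep real datetime columns, a prefix check is one way around it. The sketch below is only an illustration: `griddb_type_for` and `build_columns` are hypothetical helper names, not part of the original code or of any GridDB library, and the type names are the ones used in the mapping earlier in the article.

```python
# Hypothetical helpers (not from the original article): map a pandas dtype
# name, as produced by str(dtype), to a GridDB column type name.
EXACT_TYPES = {
    "int64": "LONG",
    "float64": "DOUBLE",
    "bool": "BOOL",
    "object": "STRING",
    "category": "STRING",
}


def griddb_type_for(dtype_name: str) -> str:
    """Return the GridDB type for a pandas dtype name, defaulting to STRING."""
    # Datetime dtype names carry a unit and sometimes a timezone, for example
    # "datetime64[ns]" or "datetime64[ns, UTC]", so match on the prefix.
    if dtype_name.startswith("datetime64"):
        return "TIMESTAMP"
    return EXACT_TYPES.get(dtype_name, "STRING")


def build_columns(dtype_names: dict) -> list:
    """Build the "columns" part of the payload from {column name: dtype name}."""
    return [
        {"name": name, "type": griddb_type_for(dtype_name)}
        for name, dtype_name in dtype_names.items()
    ]


if __name__ == "__main__":
    example = {"SerialNo": "int64", "Date": "datetime64[ns]", "Close": "float64"}
    for column in build_columns(example):
        print(column)
```

With a dataframe, you could pass `{col: str(dtype) for col, dtype in dataset.dtypes.items()}` to `build_columns`. Whether the values you then send are in a format that a TIMESTAMP column accepts is a separate question that this sketch does not address.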