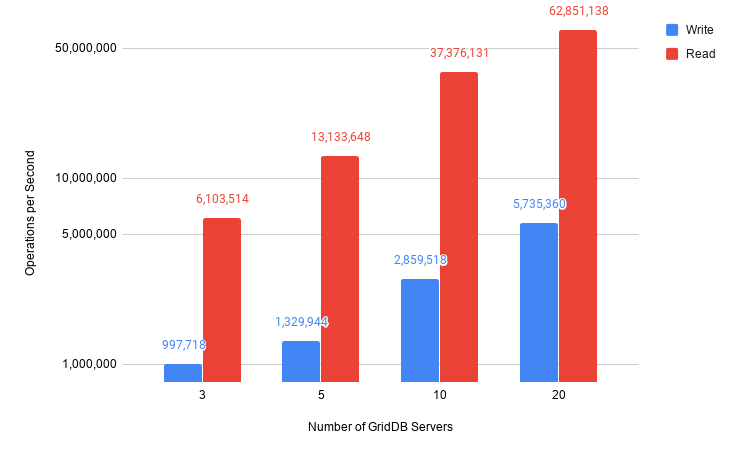

Here we look at how we were able to achieve 5 million writes per second & 60 million reads per second with only 20 GridDB nodes on Google Cloud.

Introduction

GridDB is a super fast database for IoT and Big Data applications developed by Toshiba Digital Solutions Corporation. It has a unique key-container data model that is ideal for storing sensor data, a memory-first, storage-second architecture that provides incredible performance, and it easily scales out to as many as 1,000 nodes. For use of GridDB in the cloud, please take a look at the GridDB Cloud Website.

Several databases, including Cassandra, Aerospike, and Couchbase, have published reports over the last few years in which they share that they were able to sustain one million writes per second running on public cloud services. We (Fixstars) and the GridDB team took that as a challenge and decided to see how much further we could scale GridDB and improve its performance per dollar. While these tests showcase GridDB’s excellent performance on Google Cloud Platform, they also showcase best practices for designing GridDB applications for IoT, IIoT, and other workloads that require high-velocity write performance.

Cloud Configuration

Google Cloud Platform was used to run the benchmark. We used n1-standard-8 instances, each with eight vCPUs, 30GB of memory, the default persistent boot disk, and a 375GB SSD. Published per-instance pricing was $0.42/hour. A 1:1 ratio of server and client instances was used: for 3 servers, 3 clients were used, for 5 servers, 5 clients were used, and so on. From prior experience, we knew that the sorts of GridDB workloads we had planned would be I/O bound and that GCP’s SSD offered the best performance-to-price ratio. The fast storage, combined with GCP’s Jupiter networking fabric and its 16-gigabit network egress, makes GCP an ideal platform for GridDB and allowed us to achieve near-linear scalability from three to twenty server instances.

Client Software

A new benchmark client was written in Java with maximum performance in mind. Each thread in each client process reads from or writes to its own container, which eliminates lock contention. As in the 1 Million Writes tests published for the other databases, each record was 200 bytes, and 100M records were inserted and read for each trial.
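The one-container-per-thread idea can be sketched as follows. This is a minimal illustration, not the actual benchmark client: `RecordSink`, `CountingSink`, and `PerThreadWriter` are hypothetical names, and the in-memory sink stands in for a real GridDB container so that the sketch runs without a database.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

/** Hypothetical stand-in for a database container; this is not the GridDB API. */
interface RecordSink {
    void put(long key, byte[] payload);
    long count();
}

/** In-memory sink used only so the sketch runs without a database. */
class CountingSink implements RecordSink {
    private long count; // only ever touched by its owning thread, so no lock is needed
    public void put(long key, byte[] payload) { count++; }
    public long count() { return count; }
}

class PerThreadWriter {
    static final int RECORD_BYTES = 200; // record size used in the benchmark

    /** Starts one writer per thread, each with its own sink, and returns the total records written. */
    static long writeAll(int threads, long recordsPerThread) {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        try {
            List<Future<Long>> results = new ArrayList<>();
            for (int t = 0; t < threads; t++) {
                RecordSink sink = new CountingSink(); // one container per thread
                Callable<Long> writer = () -> {
                    byte[] payload = new byte[RECORD_BYTES];
                    for (long i = 0; i < recordsPerThread; i++) {
                        sink.put(i, payload);
                    }
                    return sink.count();
                };
                results.add(pool.submit(writer));
            }
            long total = 0;
            for (Future<Long> result : results) {
                total += result.get(); // wait for each writer to finish
            }
            return total;
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException("writer failed", e);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(writeAll(8, 100_000)); // prints 800000
    }
}
```

Because no two threads ever share a sink, the write path needs no synchronization. The only coordination point is collecting the per-thread totals at the end.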

GridDB Configuration

GridDB’s configuration was changed from the default parameters with the following settings:

- storeMemoryLimit: 20480MB, checkpointMemoryLimit: 4096MB (Use as much memory for GridDB as possible, leaving just enough for the operating system and other required applications.)

- concurrency: 8 (One worker thread per CPU core.)

- storeCompressionMode: COMPRESSION (Reduce disk I/O.)

- replicationNum: 3 (Each piece of data is stored on three instances.)

Results

With just 3 Google Cloud nodes, GridDB came close to 1 million writes per second, reaching 897,718 operations per second. Performance scaled linearly as servers were added, with 20 servers achieving our goal of 5 million writes per second.

GridDB Performance using Google Cloud Platform

Three GridDB servers performed just over 6 million reads per second, while 20 servers performed over 60 million reads per second.

Operations Per Second

Each GridDB server was able to perform at least 250,000 writes per second and read at least 2 million records per second. This consistent performance makes GridDB ideal for applications where data is ingested continuously at high volumes, such as Industry 4.0, financial markets, and both Consumer and Industrial IoT.
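As a rough planning aid, the per-server floors quoted above can be turned into a lower-bound estimate of cluster throughput. The sketch below assumes that throughput keeps scaling linearly with server count, as it did in these tests. The class and method names are illustrative only.

```java
class CapacityPlan {
    static final long WRITES_PER_SERVER = 250_000;  // per-server write floor from the text
    static final long READS_PER_SERVER = 2_000_000; // per-server read floor from the text

    /** Lower-bound cluster throughput, assuming linear scaling. */
    static long clusterFloor(int servers, long opsPerServer) {
        return servers * opsPerServer;
    }

    /** Smallest server count whose lower-bound throughput reaches the target (ceiling division). */
    static long serversNeeded(long targetOpsPerSecond, long opsPerServer) {
        return (targetOpsPerSecond + opsPerServer - 1) / opsPerServer;
    }

    public static void main(String[] args) {
        System.out.println(serversNeeded(1_000_000, WRITES_PER_SERVER)); // prints 4
        System.out.println(serversNeeded(5_000_000, WRITES_PER_SERVER)); // prints 20
        System.out.println(clusterFloor(3, READS_PER_SERVER));           // prints 6000000
    }
}
```

These are floors, not predictions. The measured 20-server read rate of over 60 million per second is well above the 40 million that the per-server read floor alone would give.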

Latency

Like YCSB, our benchmark tool doesn’t directly measure latency. Instead, it calculates the average latency per host using the formula latency = 1 / operations per second, which is the average time each operation takes. Low latency is imperative for real-time analytics, monitoring, and visualization applications, where having the most recent data available ensures that optimal business decisions are made.
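To make the formula concrete, here is a small worked sketch that applies it to the per-server throughput floors reported in this post (250,000 writes per second and 2 million reads per second). The class name is illustrative and not part of the benchmark tool.

```java
class LatencyEstimate {
    /** Average time per operation on one host, in microseconds: the reciprocal of its throughput. */
    static double averageLatencyMicros(double opsPerSecondPerHost) {
        return 1_000_000.0 / opsPerSecondPerHost;
    }

    public static void main(String[] args) {
        System.out.println(averageLatencyMicros(250_000));   // prints 4.0 (writes)
        System.out.println(averageLatencyMicros(2_000_000)); // prints 0.5 (reads)
    }
}
```

Because operations run concurrently across threads, this is a throughput-derived average, not a measured round-trip time for an individual request.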

Cost Metrics

A single server used for these tests costs $0.42 per hour. A budget of about $1,500 per month is enough to achieve 1M writes per second, scaling to about $6,000 per month for 5M writes per second, which means cost efficiency improves with larger workloads. A stable absolute cost per 100M reads or writes means that costs per user or device do not escalate as your business grows. To reiterate, 1M writes per second costs only $2.10 per hour, while 5M writes per second costs only $8.40 per hour. Reads are even less expensive, with 5M reads per second costing $1.26 per hour and over 50M reads per second costing $8.40 per hour.
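The figures above follow from simple arithmetic on the $0.42 per-server hourly price. The sketch below reproduces them under two assumptions that are ours and not stated in the post: a 30-day month, and a count of server instances only (3, 5, and 20 servers, which is what $1.26, $2.10, and $8.40 per hour imply). Prices are kept in whole cents to avoid floating-point rounding.

```java
class CostModel {
    static final long CENTS_PER_SERVER_HOUR = 42; // $0.42 per server per hour, from the text
    static final int HOURS_PER_MONTH = 24 * 30;   // assumption: a 30-day month

    static long hourlyCents(int servers) {
        return servers * CENTS_PER_SERVER_HOUR;
    }

    static long monthlyCents(int servers) {
        return hourlyCents(servers) * HOURS_PER_MONTH;
    }

    /** Dollars spent to process 100M operations at a sustained cluster-wide rate. */
    static double dollarsPer100M(int servers, double opsPerSecond) {
        double seconds = 100_000_000.0 / opsPerSecond;
        return hourlyCents(servers) / 100.0 * seconds / 3600.0;
    }

    public static void main(String[] args) {
        for (int servers : new int[] {3, 5, 20}) {
            System.out.printf("%d servers: $%.2f/hour, $%.2f/month%n",
                    servers, hourlyCents(servers) / 100.0, monthlyCents(servers) / 100.0);
        }
        System.out.printf("per 100M writes: $%.4f at 1M/s, $%.4f at 5M/s%n",
                dollarsPer100M(5, 1_000_000), dollarsPer100M(20, 5_000_000));
    }
}
```

With these assumptions, 5 servers come to $1,512 per month and 20 servers to $6,048 per month, which matches the rounded $1,500 and $6,000 budgets. The cost per 100M writes drops from roughly $0.058 at 1M writes per second to roughly $0.047 at 5M writes per second.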

Conclusion

As expected, GridDB was easily able to achieve 1 million writes per second with four servers and then scaled linearly to 5 million writes per second with 20 servers. Read performance was also very good, with over 6 million reads per second with 3 servers and over 60 million reads per second with 20 servers. These results show the cost effectiveness of GridDB on Google Cloud, requiring significantly fewer computational and storage resources than other databases. The source code for the utility used to generate the workload in the above results is available here. A printable PDF is available here.

If you have any questions about the blog, please create a Stack Overflow post here: https://stackoverflow.com/questions/ask?tags=griddb

Make sure that you use the “griddb” tag so our engineers can quickly reply to your questions.